Demystifying Autonomous Driving

At the 25th World Wide Web conference in Montreal last month, Yoshua Bengio showed slides that demonstrated how today’s computer vision algorithms achieve superhuman accuracy. Additionally, he presented a video from NVIDIA. NVIDIA, which is better known for designing graphics processing units for the gaming market, presented a car which drove autonomously - after driving only 3,000 miles in one month of training. Watch the following video from minutes 1:40 to 3:00:

This great success is based on deep neural networks and reinforcement learning. Motivated by the talk and this video, I thought it was time to demystify both of these, given the big improvements in these technologies.

So, what are deep neural networks and reinforcement learning? How is it now possible to train a car to drive autonomously within one month while car manufacturers tried to achieve this for several years without success?

The big challenge for autonomous driving

For years the automotive industry had all the mechanical components (such as lane assist and cruise control) in place to drive fully autonomously, that is, to accelerate, brake and steer the car without human control and intervention. But one very important non-mechanical component was always missing: a way to determine the car’s position, that is, to self-locate the vehicle robustly and with sufficient accuracy.

Car manufacturers such as Volkswagen tried to solve the problem of positioning and self-locating by using technologies such as the Global Positioning System (GPS) or differential GPS (DGPS) and map data. During my time at Volkswagen, I set up vehicle-based and backend systems for GPS and DGPS to leverage their signals for accurate positioning. GPS is a space-based navigation system that provides location and time information in all weather conditions, anywhere on or near the earth wherever there is an unobstructed line of sight to four or more GPS satellites. DGPS is an enhancement to GPS that provides improved location accuracy, from the 15-meter nominal GPS accuracy to about 10 centimeters with best possible implementations. DGPS works by broadcasting a reference signal stemming from a network of fixed, ground-based reference stations. To set up and maintain such a network is very cost-intensive. This is why car manufacturers were always searching for a better solution.

Positioning accuracy down to a matter of centimeters is crucial to drive safely on motorways and in cities. An inaccuracy greater than 10 centimeters would mean that cars might take the wrong lane on a two-way road. Nevertheless, having a precise position is worthless without high-resolution map data in order to pinpoint yourself in relation to roads, lanes, traffic lights, turns and so on from that position. Otherwise, you do not know your surroundings, which may also contain dynamic obstacles such as traffic jams on motorways. This map data, therefore, has to be updated in real time if you want to rely on this information. In summary, this has been a big challenge facing car manufacturers in the field of autonomous driving.

By implementing deep neural networks on their graphics processing units (GPUs), NVIDIA proved that all of this is no longer necessary. All you need is visual information, machine learning and a way to train these algorithms to set them up. Solving this problem with image data is therefore a breakthrough for the automotive industry. Now, car manufacturers can start to bring their research and pre-development work for autonomous driving to their development departments, which means that in two to five years new cars will have this feature built in.

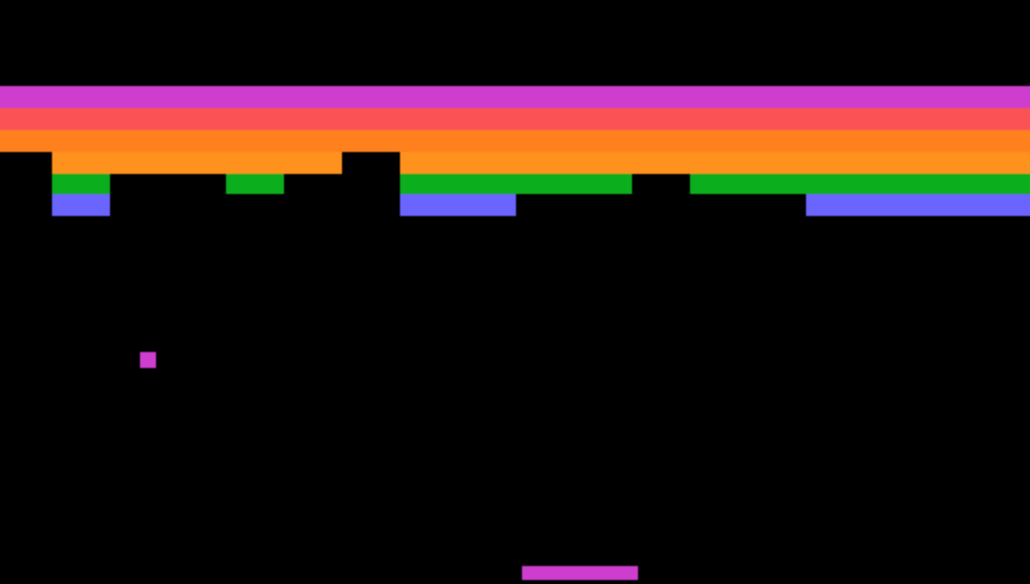

DeepMind: the evolution of deep learning

In 2010, a London-based machine learning startup called DeepMind was founded. They specialized in building general-purpose learning algorithms. Then, in 2013, they uploaded a nine-page paper to arXiv. In this paper, they presented a method which learned to play the good old Atari 2600 video games, such as Pong or Breakout, by only sampling the screen pixels and getting a reward when the game score rose. The results were astonishing. The same method learned how to play seven different games and outperformed humans in some of them. This was the first step towards general artificial intelligence, since it proved that one general method can handle the requirements of different objectives (that is, games in this case), instead of being specialized in only one, such as chess. To make this clearer: training and developing the algorithm Deep Blue, which defeated the world champion in chess, took ten years. And this algorithm was only specialized in playing chess, nothing more. Now it was proven that one single generalized method is able to play many different games with high accuracy. It’s not surprising that Google purchased the company shortly after that. In 2015, DeepMind published another paper in the journal Nature where they applied this same method to 49 games. They reached human-level performance or better in more than half of them.

And how did they achieve this great success? They used deep neural networks and reinforcement learning. Let’s have a look at both techniques now, since they are the basis for solving our positioning and self-locating problem for autonomous driving.

Advantages of neural networks

The big advantage of neural networks is that they are able to approximate practically any non-linear function. Non-linearity may sound very complicated, but it is quite easy to understand: every function where the output is not only the (weighted) sum of its inputs is a non-linear function. Daily life is full of linear and non-linear relationships. Let’s start with linear functions first. Imagine, for example, you pay somebody 10 euros per hour to wash your car. If three hours are needed, since your car is very dirty, you pay 30 euros. This is a linear relationship. Another example is that you want to bake a cake and you need one egg, 0.5 kilograms of wheat and 0.25 kilograms of sugar per person. If you want to bake this cake for 3 people, you need three times the amount of each ingredient: 3 eggs, 1.5 kilograms of wheat and 0.75 kilograms of sugar, that is, the weighted sum of its inputs.

Most physical or social phenomena, however, are non-linear. As the name suggests, non-linear relationships are not linear, which means that doubling one variable does not cause the other to double. There is an endless variety of non-linear relationships that one can encounter. Some of them still fit into familiar categories, like polynomial, exponential or logarithmic relationships, and can be described by analytical functions. The side of a square and its area, for example, have a quadratic (polynomial) relationship: if you double the side of a square, its area increases fourfold. However, if you try to describe social phenomena such as user behavior on websites, simple analytical functions no longer suffice and you need far more flexible models, for example high-degree polynomials or piecewise polynomials (splines). The same is true for creating sophisticated models for computer vision or speech recognition.
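To make the difference concrete, here is a minimal Python sketch of the two examples above. The function names and the default hourly rate are only illustrative choices for this post, not part of any library.

```python
def washing_cost(hours, rate_per_hour=10):
    """Linear: the cost is a weighted sum of the input (10 euros per hour)."""
    return rate_per_hour * hours


def square_area(side):
    """Non-linear (quadratic): the area grows with the square of the side."""
    return side ** 2


# Doubling the input doubles a linear output ...
print(washing_cost(3), washing_cost(6))   # 30 60
# ... but quadruples a quadratic one.
print(square_area(2), square_area(4))     # 4 16
```

A neural network is useful precisely because it can learn relationships of the second kind, and far messier ones, directly from data.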

Now you understand why non-linearity is an important aspect of creating general methods. Concerning the aforementioned Atari games, for example, the number of (potentially conflicting and so not linear) inputs and related outputs increases with every game added to our method. And since you play all games with the same joystick, using the same actions with different meanings for each game, non-linear relations are inevitable. Neural networks became quite famous in the past because they can be applied to such complicated and diverse problems, before it was realized how hard they are to train and that they need massive amounts of data. Fortunately, both problems have been largely addressed in the last five years, as I will go on to explain.

Deep architectures: the key to solving the training problem in deep neural networks

This section reviews the theoretical background of depth in architectures and deep neural networks. You can skip it without hurting your understanding of the rest of this post.

Deep architectures can help solve the problem of learning target functions from sparse data. Sparse data means that you have less data than you need to train your machine learning algorithms. It was already known that an architecture with insufficient depth might require many more computational elements, potentially exponentially more (with respect to input size) than architectures whose depth is matched to the task. More precisely, functions that can be compactly represented by a depth k architecture might require an exponential number of computational elements to be represented by a depth k − 1 architecture. Since the number of computational elements one can afford depends on the number of training examples available to tune or select them, the consequences are not only computational but also statistical: poor generalization can be expected when using an insufficiently deep architecture for representing some functions. And generalization is necessary for a robust method that can be applied to a variety of problems.

Until 2006, deep architectures had not been discussed much in the machine learning literature, due to poor training and generalization errors generally obtained using the standard random initialization of the parameters. Many unreported negative observations as well as experimental results suggested that gradient-based training of deep supervised multi-layer neural networks (starting from random initialization) got stuck in “apparent local minima or plateaus,” and that as the architecture got deeper, it became more difficult to obtain good generalization.

However, researchers later discovered that much better results could be achieved when pre-training each layer with an unsupervised learning algorithm, one layer after the other, starting with the first layer. Injecting an unsupervised training signal at each layer helped to guide the parameters of that layer towards better regions in parameter space. Put simply, pre-training works like a student preparing for an exam in a specialized discipline: knowing the context in advance restricts the kinds of questions or tasks that can come up, so the student attains better results.

The interested reader can get all the details in this excellent overview paper from Yoshua Bengio. In summary, deep architectures, such as deep neural networks, allow us to achieve better results with less data.

Introduction to reinforcement learning based on the Breakout game

Now let’s tackle the topic of reinforcement learning based on neural networks. Reinforcement learning is the key to getting feedback on the actions we take. This feedback gives us high-quality labeled data for training our network.

Consider the game Breakout. In this game, you control a movable paddle at the bottom of the screen and a layer of bricks lines the top third of the screen. A ball travels across the screen, bouncing off the top and side walls of the screen. When a brick is hit, the ball bounces away and the brick is destroyed. The player loses a turn when the ball touches the bottom of the screen. To prevent this from happening, the player has a movable paddle to bounce the ball upward, keeping it in play. Each time a brick is destroyed, your score increases – you get a reward.

You are probably wondering how this relates to autonomous driving. The answer is that from a machine learning perspective, autonomous driving is not very different from a game such as Breakout. Imagine your screen as a high-resolution camera mounted on the vehicle’s roof sampling data in your environment. The actions you can perform are steering, braking and accelerating, like the joystick actions in a game. The score, referred to as the reward in the literature, is the time the car is able to drive on its own. When the driver has to take action to correct the car, it is game over. This is actually very similar to how humans learn to drive with an instructor. The fewer interventions by the driver, the higher reward the car receives. Although the “state space” for autonomous driving has a much higher dimension and the sensory input data is noisier, from a conceptual perspective it is the same as playing a video game.

Imagine you want to teach a neural network to play Breakout. The screen images would be the input of your network. As an output you’d define three actions: right, left and firing. A traditional machine learning approach would be to set up a classification algorithm and feed in massive amounts of training data consisting of game screens as variables and the correct actions as labels (which recommend whether you should move left, right or fire). This leads, however, to the same problem mentioned before: it requires a lot of training data, which we’d have to sample beforehand and then hope that it covers all possible variations of the game. This is obviously not how we humans learn. We don’t need somebody to tell us a million times which action to take at each screen. All we need is feedback from time to time regarding whether we chose the right action or not. Based on this, we are able to find out the rest on our own. This is what the literature calls reinforcement learning, and it is an intermediate step between supervised and unsupervised learning.

A big problem in reinforcement learning is that the rewards, which act as our labels, are sparse and time-delayed, although they are the most important pieces of information for learning the correct behavior. Often your current action is not responsible for the reward you receive at that moment in time. The decisive steps have probably been taken earlier, for example, when you positioned the paddle correctly to bounce the ball back. This is termed the credit assignment problem, which can be tackled by introducing a time-delayed quality function based on Markov decision processes (have a look at the Bellman equation if you are interested in more details).
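For readers who want to see it written out, one common form of the Bellman equation for the quality function is sketched below. The notation is an assumption of this sketch rather than something taken from the talk: s and a are the current state and action, r is the reward received after the action, s' is the next state, a' ranges over the actions available there, and γ (between 0 and 1) is a discount factor that weights future rewards.

```latex
Q(s, a) = r + \gamma \max_{a'} Q(s', a')
```

In words: the quality of an action is the immediate reward plus the discounted quality of the best action available afterwards, which is how credit flows back to earlier moves. In a stochastic environment, the right-hand side is taken in expectation over the possible next states.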

Let’s start with a model for Breakout before we define one for autonomous driving in the next section. Imagine you are playing the game. The environment is in a certain “state”, described by the paddle’s position, the ball’s position and direction, and the remaining bricks on the screen. You are able to perform specific actions in this state such as moving the paddle left or right. These actions may result in a reward when the ball hits some bricks and your score increases.

Actions always influence the environment and yield a new state, in which the agent can again perform actions, and so on. The rule set that defines which action to choose in a specific state is called a policy. The environment is usually stochastic, which means the next state may be somewhat random. In Breakout, for example, a newly launched ball (after losing one) goes in a random direction. States, actions and the rules for moving from one state to the next can be modeled as a Markov decision process if we assume that the next state depends only on the current state and action, but not on preceding ones. One game episode (that is, from initially launching a ball until game over) can be described by the following sequence of states, actions and rewards, where s[i] represents the state, a[i] is the action and r[i+1] is the reward after performing the action (s[n] is game over):

s[0], a[0], r[1], s[1], a[1], r[2], s[2], ... , s[n-1], a[n-1], r[n], s[n]

Given one run of the Markov decision process, the total reward can be computed for one episode:

R=r[1]+r[2]+r[3]+...+r[n]

Now, it is all about maximizing this reward as well as assumed future rewards. There are some strategies, for example, to discount future rewards, since they are uncertain, but in general it’s as simple as that. Based on this function, you have to find the action that maximizes the (discounted) future reward, which forms the essential quality function, or Q-function.
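As a small illustration of discounting, the following Python sketch computes the (discounted) total reward of one episode. The discount factor `gamma` and the sample rewards are assumptions made up for this example; with `gamma=1.0` the function reduces to the plain sum R shown above.

```python
def discounted_return(rewards, gamma=0.99):
    """Return r[1] + gamma * r[2] + gamma**2 * r[3] + ... for one episode."""
    total = 0.0
    # Walk backwards so each step applies: R[t] = r[t+1] + gamma * R[t+1].
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Hypothetical Breakout episode: a brick is destroyed on steps 3 and 5.
episode_rewards = [0, 0, 1, 0, 1]

print(discounted_return(episode_rewards, gamma=1.0))  # 2.0 (undiscounted sum)
print(discounted_return(episode_rewards, gamma=0.5))  # 0.3125
```

The smaller the discount factor, the less a reward counts that lies far in the future, which reflects how uncertain it is.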

How to model autonomous driving

Regarding autonomous driving we can set up the following model: “actions” are all actions a car can do, for example, steering left or right, braking and accelerating. Steering to the left and right is the same as moving the paddle left or right. Braking and accelerating is like moving “the paddle” (that is our car) forward or reverse. The ball in the game corresponds to the car’s speed and direction. What are the bricks in the game in autonomous driving? The bricks may be the number of obstacles which the car passes without a collision. If the car drives in a city with many obstacles and is able to do this without any problems, it receives a higher score. The car’s objective is to drive as long as possible without colliding with any obstacle.

There is nothing wrong with our definition above regarding the state of the environment, but could we create a more general model? An obvious choice is the pixels recorded by your roof-mounted camera, since they contain all the important information about our environment except for the speed and direction of the car. Two consecutive images (sampling the environment with a specific frequency such as 25 frames per second) would have these covered, too. If we apply our new general model to a 1,000 x 1,000 pixel camera with 25 frames per second, take the last two seconds and convert them to grayscale with 256 levels, we would have 256^(1,000 x 1,000 x 2 x 25) states, a number larger than the number of atoms in the known universe.
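To get a feeling for the size of this number, the short Python sketch below counts its decimal digits using a logarithm instead of computing the number itself. The variable names are only illustrative, and the figure of roughly 10^80 atoms in the observable universe is a commonly quoted estimate, not something taken from the talk.

```python
import math

width, height = 1_000, 1_000      # camera resolution in pixels
frames = 2 * 25                   # two seconds at 25 frames per second
gray_levels = 256                 # possible values per pixel

pixels = width * height * frames  # 50,000,000 pixel values per state

# The number of states is gray_levels ** pixels. Its count of decimal digits
# is floor(pixels * log10(gray_levels)) + 1.
digits = math.floor(pixels * math.log10(gray_levels)) + 1

print(f"pixel values per state: {pixels:,}")
print(f"decimal digits in the number of states: {digits:,}")
print("digits in 10^80 (estimated atoms in the universe): 81")
```

The number of states has on the order of 120 million digits, compared with 81 digits for the atom estimate, so storing one table entry per state is out of the question.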

This is where deep learning comes into play. Neural networks are exceptionally useful for coming up with excellent features for highly structured data. We can model our Q-function, which maps a state and an action to an expected future reward, with a deep neural network that takes the state (fifty camera images) as input and outputs a Q-value for every possible action (all values of the car’s movement). The number of actions depends on the hardware used. In Breakout, for example, if you use a joystick, you have 18 actions by default (that is neutral, up, down, left, right, up-left, up-right, down-left, down-right, and also each one of those with the button pressed). In the case of a steering wheel and two pedals, one for accelerating and one for braking, you have more than 100 potential actions for each of them.
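The action counts can be reproduced with a few lines of Python. The joystick part follows the enumeration above; the steering discretization (101 evenly spaced wheel positions between -1 and 1) is purely an assumption for illustration, not NVIDIA's actual setup.

```python
from itertools import product

# Joystick: three horizontal positions x three vertical positions x button.
horizontal = ["left", "neutral", "right"]
vertical = ["up", "neutral", "down"]
button_pressed = [False, True]

joystick_actions = list(product(horizontal, vertical, button_pressed))
print(len(joystick_actions))  # 18

# Hypothetical steering wheel: 101 discrete positions from full left (-1.0)
# to full right (+1.0). The pedals could be discretized in the same way.
steering_levels = [i / 50 - 1 for i in range(101)]
print(len(steering_levels), steering_levels[0], steering_levels[-1])  # 101 -1.0 1.0
```

Each entry in these lists would correspond to one output of the network.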

So, the input to the network is fifty 1,000×1,000 grayscale images. The output of the network is a Q-value for each possible action (more than 100 for all steering wheel angles and pedal positions for braking and accelerating). The Q-values can be any real values, which makes this a traditional regression task that can be optimized with a simple squared error loss. In order to perform this computation, however, you need a lot of processing units. Now you can probably understand why NVIDIA’s GPUs were necessary for training based on these huge amounts of pixels.
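The regression target and the squared error loss can be sketched in plain Python as follows. This is a simplified illustration in the spirit of Q-learning, not the code used by NVIDIA or DeepMind: the function names, the discount factor `gamma` and the example Q-values are all assumptions, and a real system would compute the Q-values with a neural network and minimize the loss by gradient descent.

```python
def td_target(reward, next_q_values, gamma=0.99, done=False):
    """Target for Q(s, a): the reward plus the discounted best next Q-value."""
    if done:
        # After game over there is no future reward to add.
        return reward
    return reward + gamma * max(next_q_values)


def squared_error_loss(q_values, action, target):
    """Squared error between the predicted Q-value of the chosen action and the target."""
    return 0.5 * (target - q_values[action]) ** 2


# Hypothetical network outputs for three actions: left, right, fire.
q_values = [0.2, 0.5, 0.1]        # predictions for the current state
next_q_values = [0.0, 1.0, 0.4]   # predictions for the next state

target = td_target(reward=1.0, next_q_values=next_q_values, gamma=0.9)
loss = squared_error_loss(q_values, action=1, target=target)
print(target, loss)  # roughly 1.9 and 0.98
```

Only the Q-value of the action that was actually taken enters the loss; the other outputs are left untouched for that training step.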

To be honest, there are many more tricks that are actually used to make this work – like target network, error clipping, reward clipping, etc., but these are outside the scope of this post. By now you should understand how it was possible to train a car to drive autonomously as shown in the introductory video.

Conclusion

In summary, we are currently on an amazing journey towards autonomous driving. Some years ago, nobody really believed that it was possible to solve the problem of robustly detecting and self-locating cars using only image data. Achieving superhuman accuracy in computer vision with deep architectures for neural networks and solving the problem of training them efficiently has laid the foundations for autonomous driving.